Teradata to BigQuery Migration: Challenges, Best Practices, and Practical Guide

We’re not going to sugarcoat it: Migrating from Teradata to BigQuery is complex. Don’t worry—our guide will simplify and streamline this process for you. We’ll illuminate the path, highlighting the key contrasts between Teradata’s traditional on-premises system and Google BigQuery’s innovative cloud-based platform. We’ll explore these differences in depth, focusing on storage solutions, data processing capabilities, and scalability options.

We’ll also address critical business topics, ensuring you make a smooth transition to the cloud. And no cloud database migration guide would be complete without guidance to secure stakeholder buy-in, optimize your budget, and minimize operational disruptions. You’ll also get practical tips to make this migration easy, giving you everything you need to prove you did it right. (Pre-emptive high five!)

Lastly, Datafold’s powerful AI-driven migration approach makes it faster, more accurate, and more cost-effective than traditional methods. With automated SQL translation and data validation, Datafold minimizes the strain on data teams and eliminates lengthy timelines and high costs typical of in-house or outsourced migrations.

This lets you complete full-cycle migration with precision–and often in a matter of weeks or months, not years–so your team can focus on delivering high-quality data to the business. If you’d like to learn more about the Datafold Migration Agent, please read about it here.

Common Teradata to BigQuery migration challenges

When data engineers move their data from Teradata to BigQuery, they usually run into a few challenges. It takes a lot of detailed planning and careful work to make sure it’s done correctly. So, we’re going to dive into the stuff that usually throws these migrations off track: architecture and SQL dialects. When you understand how they’re different, you’ll have a better shot at a smooth transition. You’ll also see how these differences affect data distribution, query performance, and scalability.

Architecture differences

The architectural differences between Teradata and BigQuery are substantial, starting with the basics of how they work. Cloud-based platforms like BigQuery have very different approaches to storage and computation. Legacy platforms like Teradata have those two things all intertwined like spaghetti.

As you can imagine, going from an on-premise solution to a cloud-based solution is a big deal. Transitioning from physical infrastructure to virtual, service-oriented architecture requires a big shift in how you think about and manage data scalability, storage, and computing resources.

Teradata uses a Massively Parallel Processing (MPP) architecture, which spreads data across multiple nodes for concurrent processing. It closely integrates data storage with computational power, which is important to understand as you migrate to BigQuery’s distinct architecture.

Teradata’s architecture explained

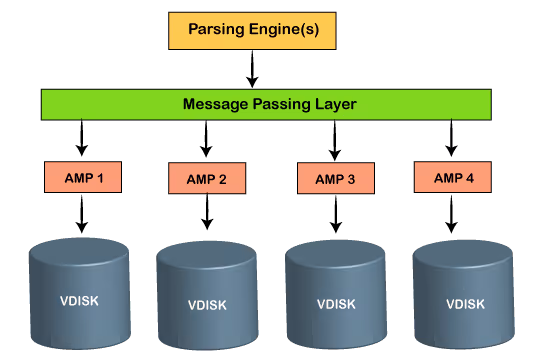

Teradata clusters are composed of a network of Access Module Processors (AMPs), which serve as the core drivers of its data processing prowess. Each AMP operates on an individual server instance, bearing a share of both computational and storage duties for the cluster. In this configuration, storage and compute resources are tightly coupled, with specific data segments being stored and processed on the same server. Run out of one or the other and the whole ship goes down. :(

This integrated approach is at the heart of how Teradata’s data management and query performance work. Similar to other Massively Parallel Processing (MPP) systems, such as AWS Redshift, Teradata uses a hashing algorithm to evenly distribute data across its AMPs.

However, this method presents several challenges in terms of scalability and performance:

- Reliance on hashing key for query efficiency: The effectiveness of queries heavily relies on their alignment with the hashing key. If joins and filters in a query do not correspond with the hashing key, it can lead to diminished performance due to the need for redistributing data across different AMPs.

- Scaling constraints: Expanding the system’s storage or computational power, necessary for handling more queries, involves adding more nodes. Scaling your database means scaling the entire infrastructure. Aside from being costly, adding nodes also necessitates redistributing data among both new and existing nodes. Although the recent introduction of Teradata VantageCloud offers compute-only clusters, these still rely on a Primary Cluster for storage, which can become a scalability bottleneck.

- Limited elasticity in compute demand: Teradata’s architecture isn’t very flexible when it comes to scaling for query processing. Adjusting the cluster’s available storage is a complex task, often leading to either underuse of resources or performance bottlenecks. It gets expensive quickly and isn’t ideal as an enterprise data warehouse.

BigQuery architecture explained

BigQuery’s architecture is cloud native and serverless. It separates compute and storage functions, making it more scalable and flexible, while providing efficient resource usage.

BigQuery’s standout feature is its fully-managed, serverless data warehouse. Unlike Teradata, it automatically scales. As a result, compute resources are dynamically allocated and adjusted based on the workload. During times of high usage, such as business hours when data analysts are running complex queries or generating data analytics reports, BigQuery automatically ramps up to handle the load and scales down during periods of lower activity. Cheap, fast, and easy! Sweet!

Its adaptability is perfectly suited to meet varying data processing requirements. BigQuery’s management is user-friendly and accessible (bonus!), offering intuitive controls through an API or a user interface. Even users with limited technical expertise can efficiently manage and interact with their data.

Benefits of migration to BigQuery

Embracing BigQuery as your data warehousing solution has many advantages, like operational efficiencies and enhanced data processing capabilities. Here are just a few big benefits:

- Reduced DevOps effort: Transitioning to BigQuery significantly eases the burden associated with DevOps tasks. And thank goodness, because DevOps people are always super busy. BigQuery simplifies maintenance and optimization. Its serverless nature means spending less time on maintenance and tuning.

- Shift to ELT (Extract, Load, Transform) approach: With Teradata, you typically need to filter and transform your data before you load it (ETL vs. ELT). This is how pipelines were designed pre-cloud because storage and compute were limited and not dynamically scalable. But BigQuery, with its cloud native architecture, supports a more efficient ELT model. Data can be loaded in its raw form and transformed on its way out of BigQuery as needed, giving data analysts more flexibility.

Simplified data management and flexibility: Efficient data management in a Teradata data warehouse requires careful planning of table layouts, indices, and data distribution strategies. BigQuery automates most of this complexity. While it does table partitioning and clustering for performance optimization, it doesn’t require intricate setup and planning that Teradata does. This streamlined approach makes it easier to organize and manage data.